In the dazzling glow of artificial intelligence’s rapid ascent, a persistent and often overlooked shadow grows ever larger. We celebrate AI’s triumphs, from diagnosing diseases with superhuman accuracy to generating art and prose in seconds. We integrate it into our search engines, our social media feeds, and our personal assistants. The narrative is one of boundless progress and limitless potential. Yet behind every sophisticated chatbot, every nuanced recommendation algorithm, and every predictive model lies a voracious consumption of energy and computational resources, creating a significant and escalating environmental crisis. This is not a peripheral issue but a central challenge that threatens to undermine the very sustainability of our digital future. The era of ignoring AI’s digital carbon footprint is over; we are now faced with the urgent task of understanding, measuring, and mitigating its impact on our planet.

This extensive exploration delves into the multifaceted environmental cost of AI, moving beyond the headlines to examine the full lifecycle of its energy consumption, the tangible consequences for our ecosystems, and the pioneering solutions that could pave the way for a greener, more responsible AI revolution.

A. Deconstructing the AI Energy Black Hole: Where the Watts Go

To comprehend the scale of AI’s environmental impact, one must first understand where and how it consumes such colossal amounts of energy. It is not a single, monolithic drain but a cascade of energy-intensive processes spanning its entire existence.

A. The Model Training Frenzy: The Initial Energy Surge

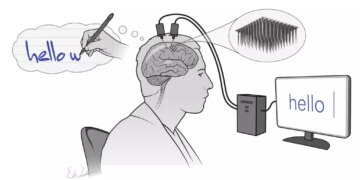

The creation of a state-of-the-art AI model, particularly large language models (LLMs) like GPT-4 or its successors, is analogous to a massive industrial undertaking. The training phase is the most computationally demanding part of the lifecycle. This process involves feeding terabytes, sometimes petabytes, of data into a neural network with billions, or even trillions, of parameters. The model repeatedly processes this data, adjusting its internal parameters minutely with each iteration to minimize errors and improve accuracy.

This requires weeks or months of non-stop computation on clusters of high-performance GPUs (Graphics Processing Units) or specialized TPUs (Tensor Processing Units). These processors, while efficient for parallel computation, draw immense power and generate enormous heat, necessitating powerful cooling systems. A landmark 2019 study from the University of Massachusetts Amherst found that training a single large AI model can emit over 626,000 pounds of carbon dioxide equivalent, nearly five times the lifetime emissions of an average American car, including its manufacturing. This is the foundational carbon debt incurred before the model even answers its first query or generates its first line of code.
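To make the scale concrete, a training run’s footprint can be roughly estimated as hardware power draw multiplied by run time, scaled up for facility overhead, and then multiplied by the carbon intensity of the local grid. The Python sketch below is illustrative only: the function name and every input (GPU count, wattage per GPU, overhead factor, grid intensity) are assumptions chosen for the example, not figures from the study cited above.

```python
LBS_PER_KG = 2.20462  # pounds per kilogram


def training_emissions_kg(num_gpus, gpu_watts, hours, overhead_factor, grid_kg_per_kwh):
    """Rough CO2-equivalent estimate (kg) for a single training run."""
    # Energy drawn by the accelerators alone, in kilowatt-hours.
    it_energy_kwh = num_gpus * gpu_watts * hours / 1000.0
    # Scale up for cooling and other facility overhead.
    facility_energy_kwh = it_energy_kwh * overhead_factor
    # Convert energy to emissions using the grid's carbon intensity.
    return facility_energy_kwh * grid_kg_per_kwh


# Hypothetical run: 512 GPUs at 400 W each for 30 days,
# 20% facility overhead, grid at 0.4 kg CO2e per kWh (all assumed values).
estimate_kg = training_emissions_kg(512, 400, 30 * 24, 1.2, 0.4)
print(f"{estimate_kg / 1000:.1f} tonnes CO2e")
print(f"{estimate_kg * LBS_PER_KG:,.0f} pounds CO2e")
```

With these assumed inputs the estimate comes to roughly 71 tonnes of CO2 equivalent. Real figures depend heavily on hardware utilization, the number of experimental runs, and the grid mix, which is why published estimates vary so widely.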

B. The Inference Epidemic: The Perpetual Energy Drain

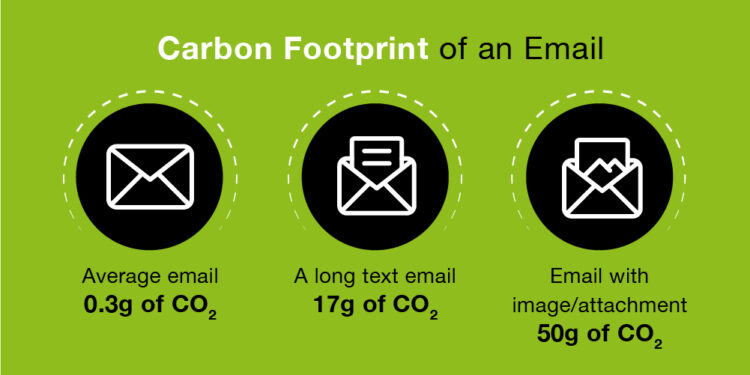

While training is a massive, one-off (or periodic) energy spike, the real sustainability challenge lies in the inference phase. Inference is the process of using a trained model to make predictions or generate outputs. Every time you ask a smart speaker a question, use a real-time translation app, or scroll through a social media feed curated by an AI, you are triggering an inference event.

The critical point is scale. A model like Google’s BERT now helps process billions of search queries every single day. Each query, while individually minuscule in its energy cost, collectively represents a continuous, global energy drain that can far surpass the one-time cost of training. As AI becomes more deeply embedded in every digital interaction, from optimizing logistics routes to filtering spam emails, this “inference epidemic” becomes the primary and most persistent source of AI’s carbon footprint. It’s a death by a thousand cuts, where the cumulative impact of trillions of tiny computations creates a massive environmental burden.
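A quick back-of-the-envelope calculation shows how fast tiny per-query costs add up. The sketch below computes how many days of serving it takes for cumulative inference energy to match a one-time training cost. The function name and all three inputs are hypothetical values picked for illustration, not measurements of any real model.

```python
def days_until_inference_matches_training(training_kwh, kwh_per_query, queries_per_day):
    """Days of serving after which inference energy equals the training energy."""
    daily_inference_kwh = kwh_per_query * queries_per_day
    if daily_inference_kwh <= 0:
        raise ValueError("daily inference energy must be positive")
    return training_kwh / daily_inference_kwh


# Assumed values: 1,000,000 kWh to train, 0.3 Wh per query,
# and 100 million queries per day.
days = days_until_inference_matches_training(
    training_kwh=1_000_000,
    kwh_per_query=0.0003,
    queries_per_day=100_000_000,
)
print(f"Inference overtakes training after about {days:.0f} days")
```

Under these assumptions, inference matches the training cost in about a month and keeps accumulating for as long as the model stays in service.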

C. The Data Center Ecosystem: The Engine Room’s Hunger

The hardware that powers this digital brain resides in data centers, which are the physical embodiment of AI’s energy appetite. These are not mere server rooms but industrial-scale facilities that consume power for two primary functions:

- Computational Power: The electricity required to run the CPUs, GPUs, and TPUs at full throttle.

- Cooling Overhead: The significant energy needed to remove the waste heat generated by this computation. Without powerful air conditioning, liquid cooling systems, and vast ventilation networks, the hardware would quickly overheat and fail.

Globally, data centers already account for about 1-2% of worldwide electricity demand, a percentage that is poised to grow sharply with the AI boom. Tech giants like Google, Microsoft, and Amazon are constantly building new facilities, often in regions with cheap but not always clean energy sources. The location of a data center is a major determinant of its carbon footprint; one powered by coal-fired plants can emit well over ten times as much CO2 per kilowatt-hour as one powered by geothermal or solar energy.
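Two simple ratios capture both points. The industry commonly expresses cooling and other overhead as power usage effectiveness (PUE), defined as total facility energy divided by the energy used by IT equipment, and the effect of location follows from multiplying energy use by the grid’s carbon intensity. The sketch below is a minimal illustration: the function names are hypothetical, and the facility energy figures and grid intensities are rounded, assumed values rather than data for any specific site.

```python
def power_usage_effectiveness(total_facility_kwh, it_equipment_kwh):
    """PUE: total facility energy divided by IT equipment energy (1.0 is ideal)."""
    return total_facility_kwh / it_equipment_kwh


def overhead_share(pue):
    """Fraction of facility energy spent on cooling and other non-IT loads."""
    return 1.0 - 1.0 / pue


def emissions_tonnes(total_facility_kwh, grid_g_per_kwh):
    """Annual emissions in tonnes CO2e for a given grid carbon intensity."""
    return total_facility_kwh * grid_g_per_kwh / 1_000_000  # grams to tonnes


# Assumed facility: 10 GWh of IT load and 15 GWh total per year.
total_kwh, it_kwh = 15_000_000, 10_000_000
pue = power_usage_effectiveness(total_kwh, it_kwh)
print(f"PUE = {pue:.2f}, overhead share = {overhead_share(pue):.0%}")

# Illustrative grid intensities in g CO2e per kWh (assumed round numbers).
for label, intensity in [("coal-heavy grid", 820), ("low-carbon grid", 40)]:
    print(f"{label}: {emissions_tonnes(total_kwh, intensity):,.0f} tonnes CO2e per year")
```

The same facility running the same workload lands at very different annual emissions depending only on where its electricity comes from.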

D. The Hardware Lifecycle: From Mine to Landfill

The environmental cost of AI extends beyond electricity consumption. The lifecycle of the specialized hardware itself is a source of significant ecological degradation.

- Resource-Intensive Manufacturing: The production of GPUs and TPUs requires mined minerals and metals such as coltan, cobalt, and lithium. These mining operations are often associated with habitat destruction, water pollution, and significant carbon emissions.

- Short Lifespan and E-Waste: The relentless pace of AI innovation leads to rapid hardware obsolescence. Older chips, unable to handle the latest massive models, are decommissioned every few years, contributing to the world’s growing electronic waste (e-waste) problem. Properly recycling this complex hardware is challenging and often not done efficiently, leading to toxic materials leaching into soil and groundwater.

B. Quantifying the Crisis: The Tangible Impacts of an Intangible Technology

The abstract concept of “energy consumption” translates into very real-world environmental consequences. The AI carbon footprint is not a future threat; it is a present-day reality with measurable effects.

A. Greenhouse Gas Emissions and Climate Change

The most direct impact is the emission of greenhouse gases, primarily CO2, from the electricity generation required to power data centers. As noted above, training a single large model can emit hundreds of thousands of pounds of CO2 equivalent. When multiplied by the thousands of models being developed and the billions of daily inferences, the cumulative contribution to atmospheric CO2 becomes substantial. This exacerbates global warming, leading to more frequent and severe weather events, rising sea levels, and ecosystem collapse. The irony is stark: we are using a technology that promises to solve complex human problems, yet in doing so, we may be accelerating one of the most complex problems of all: climate change.

B. Water Footprint: The Thirsty Servers

A less publicized but equally critical impact is AI’s massive water footprint. Large data centers rely on vast quantities of water for cooling, often operating powerful evaporative cooling towers. A 2023 report revealed that Microsoft’s global water consumption spiked by 34% from 2021 to 2022, a surge largely attributed to its AI-related computing infrastructure. In one example, Microsoft’s data center in Iowa, used to train OpenAI models, consumed enough water to fill over 900 Olympic-sized swimming pools. This consumption places immense strain on local water resources, especially in arid regions where these facilities are often built for access to cheap land and power. This can lead to water scarcity for local communities and agriculture, creating a direct conflict between technological advancement and basic human needs.
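Comparisons like “Olympic-sized swimming pools” are easier to judge with the arithmetic spelled out. A common way to express cooling water demand is water usage effectiveness (WUE), measured in liters of water per kilowatt-hour of IT energy. The sketch below assumes a nominal pool volume of 2.5 million liters; the function names, the energy figure, and the WUE value are hypothetical and do not describe any particular facility.

```python
OLYMPIC_POOL_LITERS = 2_500_000  # nominal 50 m x 25 m x 2 m pool


def cooling_water_liters(it_energy_kwh, wue_liters_per_kwh):
    """Water consumed for cooling, given IT energy and a WUE value."""
    return it_energy_kwh * wue_liters_per_kwh


def olympic_pools(liters):
    """Express a water volume as a number of nominal Olympic pools."""
    return liters / OLYMPIC_POOL_LITERS


# Assumed values: 100 GWh of IT energy per year at 1.8 liters per kWh.
liters = cooling_water_liters(100_000_000, 1.8)
print(f"{liters:,.0f} liters, or about {olympic_pools(liters):.0f} Olympic pools")
```

With these assumed inputs the result is about 72 pools per year; a site’s actual figure depends on its cooling design and local climate.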

C. Broader Ecological Disruption

The chain of impact extends further. The mining for hardware components ravages local ecosystems, leading to deforestation, soil erosion, and biodiversity loss. The disposal of e-waste, if not managed with the highest standards, contaminates land and water with heavy metals like lead, mercury, and cadmium. Furthermore, the massive energy demand can drive the expansion of fossil fuel infrastructure, perpetuating a cycle of pollution and environmental degradation.

C. Pathways to a Sustainable AI Future: From Problem to Solution

Acknowledging the severity of the problem is only the first step. The crucial next phase is action. A multi-pronged strategy, involving technological innovation, algorithmic efficiency, and corporate accountability, is essential to steer AI toward a sustainable path.

A. The Green Energy Imperative

The single most impactful intervention is to power data centers with 100% renewable energy. Tech giants have made progress here, with Google, Microsoft, and Amazon all announcing ambitious “carbon-neutral” or “carbon-negative” goals. This involves:

- Power Purchase Agreements (PPAs): Contracting directly with wind and solar farms to add clean energy to the grid.

- On-site Renewable Generation: Installing solar panels and wind turbines at data center locations.

- Investing in Advanced Nuclear and Geothermal: Exploring next-generation baseload clean energy sources to ensure reliability when the sun isn’t shining and the wind isn’t blowing.

B. The Algorithmic Efficiency Revolution

We must do more with less. The field of “Green AI” is focused on designing more computationally efficient models. Key approaches include:

- Model Pruning and Quantization: Removing redundant parameters from a neural network (pruning) and using lower-precision arithmetic for calculations (quantization) can dramatically reduce a model’s size and computational needs with minimal loss of performance.

- Knowledge Distillation: Training a small, efficient “student” model to mimic the behavior of a large, cumbersome “teacher” model, effectively compressing the AI’s knowledge.

- Efficient Architecture Search: Using automated methods to design neural network architectures that are inherently more efficient from the ground up, such as Transformer variants that require less memory and processing power.
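The first three techniques above can be illustrated on a toy scale. The sketch below uses plain Python lists instead of a real deep learning framework, so it shows the ideas rather than a production implementation: magnitude pruning zeroes the smallest weights, symmetric int8 quantization stores weights as small integers plus one scale factor, and a distillation loss measures how far a student’s softened predictions are from a teacher’s. All function names and sample values are assumptions made for this example.

```python
import math


def magnitude_prune(weights, sparsity):
    """Zero out the given fraction of weights with the smallest magnitudes."""
    k = int(len(weights) * sparsity)
    if k == 0:
        return list(weights)
    threshold = sorted(abs(w) for w in weights)[k - 1]
    pruned, removed = [], 0
    for w in weights:
        if abs(w) <= threshold and removed < k:
            pruned.append(0.0)
            removed += 1
        else:
            pruned.append(w)
    return pruned


def quantize_int8(weights):
    """Symmetric quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from integers and the scale."""
    return [q * scale for q in quantized]


def softmax(logits, temperature=1.0):
    """Numerically stable softmax with a temperature that softens the output."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL divergence from the softened teacher distribution to the student's."""
    teacher = softmax(teacher_logits, temperature)
    student = softmax(student_logits, temperature)
    return sum(t * math.log(t / s) for t, s in zip(teacher, student) if t > 0)


weights = [0.9, -0.05, 0.4, 0.01, -0.7, 0.02]
sparse = magnitude_prune(weights, sparsity=0.5)
ints, scale = quantize_int8(sparse)
print("pruned:", sparse)
print("int8:", ints, "scale:", scale)
print("distillation loss:", distillation_loss([1.0, 0.5, 0.1], [2.0, 0.3, -1.0]))
```

In practice these steps are applied with framework tooling and followed by fine-tuning to recover accuracy, and distillation losses are usually combined with the ordinary training loss on labeled data.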

C. Smarter Hardware and Data Center Design

The physical infrastructure must evolve. Innovations include:

- Specialized AI Chips: The development of application-specific integrated circuits (ASICs), such as TPUs, that are designed specifically for AI workloads and can perform more computations per watt of energy than general-purpose CPUs and GPUs.

- Advanced Cooling Techniques: Moving beyond traditional air conditioning to more efficient methods like liquid immersion cooling, where servers are submerged in a non-conductive fluid that absorbs heat far more effectively.

- Strategic Data Center Placement: Locating new data centers in naturally cold climates to reduce cooling needs, or near sources of abundant geothermal or hydroelectric power.

D. Cultivating a Culture of Transparency and Reporting

Currently, there is no standardized way to report the environmental cost of AI. Mandating and normalizing this is critical.

- Carbon Accounting for AI: Companies should be required to calculate and disclose the full lifecycle carbon emissions of training and operating their major AI models, similar to financial reporting.

- Leaderboards and Benchmarks: The research community should establish benchmarks that reward not just model accuracy, but also computational and energy efficiency. A model that reaches 99% of a larger model’s accuracy with 10% of the energy is arguably the greater achievement.
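One simple way an efficiency-aware leaderboard could work is to require a minimum accuracy and then rank qualifying models by the energy they used. The sketch below illustrates that idea only; the `Submission` record, the `rank_by_energy` function, the accuracy floor, and the sample entries are all hypothetical and do not describe any existing benchmark.

```python
from dataclasses import dataclass


@dataclass
class Submission:
    name: str
    accuracy: float    # fraction of correct predictions, 0.0 to 1.0
    energy_kwh: float  # reported energy for training and evaluation


def rank_by_energy(submissions, min_accuracy):
    """Keep models that meet the accuracy floor, lowest energy first."""
    eligible = [s for s in submissions if s.accuracy >= min_accuracy]
    return sorted(eligible, key=lambda s: s.energy_kwh)


# Made-up entries for illustration.
entries = [
    Submission("large-model", accuracy=0.95, energy_kwh=1000.0),
    Submission("compact-model", accuracy=0.94, energy_kwh=100.0),
    Submission("tiny-model", accuracy=0.80, energy_kwh=10.0),
]

for rank, s in enumerate(rank_by_energy(entries, min_accuracy=0.90), start=1):
    print(rank, s.name, f"{s.accuracy:.0%}", f"{s.energy_kwh:.0f} kWh")
```

Here the compact model ranks first because it clears the accuracy floor with a tenth of the energy, while the tiny model is excluded for falling below it. A ranking like this only works if energy use is measured and disclosed consistently, which is the point of the carbon accounting item above.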

E. Regulatory and Economic Levers

Governments and international bodies have a role to play in creating a framework that incentivizes sustainability.

- Carbon Pricing: Implementing a carbon tax would internalize the environmental cost of AI development, making energy-inefficient practices more expensive and green alternatives more financially attractive.

- Efficiency Standards: Setting mandatory energy performance standards for data centers and AI hardware, pushing the industry toward continuous improvement.

- Funding for Green AI Research: Directing public research grants towards projects that explicitly aim to reduce the environmental impact of machine learning.
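The effect of carbon pricing is easy to see with a small worked example. The sketch below compares two hypothetical ways of running the same workload, one cheaper but more carbon-intensive and one pricier but cleaner, and shows how a price per tonne of CO2 can change which is the lower-cost choice. The option names, costs, emissions, and prices are invented for illustration.

```python
def total_cost(compute_cost, emissions_tonnes, carbon_price_per_tonne):
    """Compute cost plus the carbon charge for the associated emissions."""
    return compute_cost + emissions_tonnes * carbon_price_per_tonne


def cheapest(options, carbon_price_per_tonne):
    """Return the option name with the lowest total cost.

    options maps a name to a (compute_cost, emissions_tonnes) pair.
    """
    return min(
        options,
        key=lambda name: total_cost(*options[name], carbon_price_per_tonne),
    )


# Hypothetical options: (compute cost in dollars, emissions in tonnes CO2e).
options = {
    "fossil-heavy region": (100_000, 300),
    "renewable region": (110_000, 50),
}

for price in (0, 50):
    print(f"carbon price ${price}/tonne -> {cheapest(options, price)}")
```

With no carbon price the fossil-heavy option wins on cost alone. In this example the break-even sits at $40 per tonne, and any price above that makes the cleaner option the cheaper one.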

Conclusion: The Choice for an Intelligent and Sustainable Future

The rise of artificial intelligence represents one of the most transformative periods in human history. Its potential to drive scientific discovery, enhance human creativity, and solve pressing global challenges is undeniable. However, this potential cannot be realized if the technology is built on a foundation of environmental degradation and unsustainable resource consumption. The hidden cost of AI is no longer hidden; it is a bill that is coming due.

The path forward requires a fundamental shift in mindset. The race to build the biggest, most powerful model must be tempered by a parallel race to build the smartest, most efficient, and most sustainable one. It is a collective responsibility shared by researchers, engineers, corporate leaders, policymakers, and consumers. By demanding transparency, championing efficiency, and insisting on renewable energy, we can harness the incredible power of AI not as a force that contributes to planetary decline, but as a vital tool in the mission to build a healthier, more equitable, and sustainable world. The intelligence we create must be matched by the wisdom with which we deploy it.