For centuries, translating human thought into communicable language has been a physical, mechanical process. From the quill on parchment to the clack of typewriter keys and the tap of fingers on modern touchscreens, our means of expression have been bottlenecked by the speed and dexterity of our muscles. That paradigm is now being challenged. Brain-interface typing, a direct digital conduit between the human brain and a computer, is not merely a new tool; it is the beginning of a symbiotic relationship between our biological consciousness and the digital realm. The technology promises to narrow the gap between thought and action, pointing to a future where we compose emails, create art, and control our digital environments as effortlessly as we form an idea. This article examines the mechanics, implications, and future trajectory of this technology.

A. Deconstructing the Miracle: How Brain-Interface Typing Actually Works

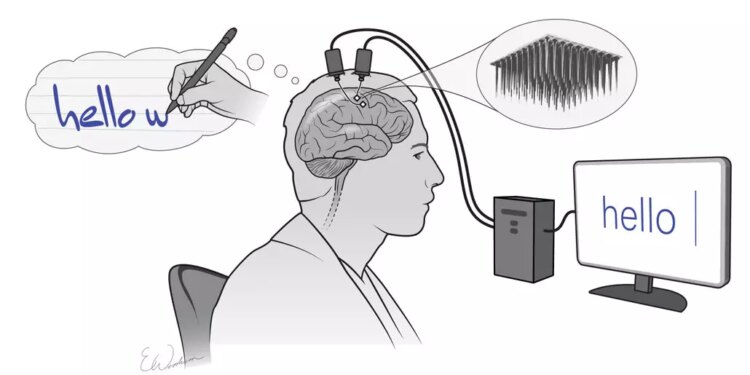

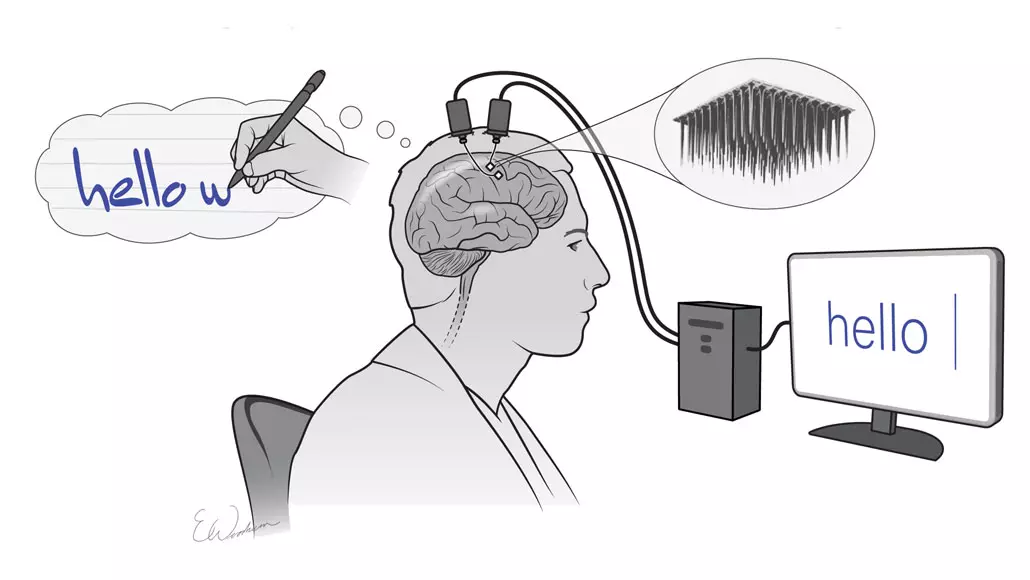

The concept of “thinking to text” seems like science fiction, but its operation is grounded in the measurable principles of neuroscience and sophisticated signal processing. It’s a multi-stage digital pipeline that transforms analog brain activity into discrete digital commands.

A.1. The Signal Acquisition Phase: Listening to the Neural Chorus

The first and most critical step is capturing the brain’s electrical symphony. This is achieved through various technologies, each with its own trade-offs between invasiveness, resolution, and practicality.

- Non-Invasive Electroencephalography (EEG): This is the most common and consumer-friendly approach. A cap or headset fitted with electrodes is placed on the scalp. These electrodes detect the minute electrical fluctuations produced by the synchronized firing of millions of neurons beneath the skull. While EEG is safe and portable, its major limitation is spatial resolution. The signals are attenuated and blurred by the skull and scalp, making it difficult to pinpoint the exact origin of fine-grained neural activity. It’s like listening to a full orchestra from outside the concert hall: you can hear the music, but isolating a single violin is challenging.

- Invasive and Semi-Invasive Methods: For higher-fidelity control, researchers turn to more direct interfaces.

  - Electrocorticography (ECoG) involves placing a grid of electrodes directly on the surface of the brain, beneath the skull. This provides a much clearer and more powerful signal than EEG because it bypasses the signal-degrading layers of bone and skin.

  - Intracortical Microelectrode Arrays represent the most invasive and highest-resolution option. These are tiny chips with dozens to hundreds of microscopic needles that penetrate the brain tissue to record the activity of individual neurons or small neural clusters. This offers an unparalleled view into the brain’s communication network.

A.2. The Signal Processing and Translation Phase: From Noise to Meaning

The raw neural data, often drowned in biological and environmental “noise,” is useless without sophisticated interpretation. This is where machine learning and artificial intelligence perform their magic.

- Feature Extraction: The software first sifts through the massive, chaotic stream of data to identify meaningful patterns or “features.” These could be specific changes in signal power within certain frequency bands (like the mu or beta rhythms) or the appearance of well-researched brain signals like the P300 potential, a positive voltage deflection that occurs about 300 milliseconds after a person recognizes a rare or significant stimulus.

- Algorithmic Translation: This is the core of the system. Machine learning models, often deep neural networks, are trained on recordings that pair specific brain patterns with intended actions. For example, in a visual speller system, the algorithm learns to recognize the P300 signal generated when the user focuses on a desired letter flashing on a screen. In more advanced motor imagery systems, the algorithm is trained to decode the brain’s motor commands (the same signals that would be sent to your hand to move a cursor or type a key) directly from the neural activity, without any physical movement.
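To make the feature-extraction step more concrete, here is a minimal sketch of a band-power calculation. It is an illustration only, not how any particular product works: the function name `band_power`, the 128 Hz sampling rate, the synthetic signal, and the band edges (roughly 8 to 12 Hz for mu, 13 to 30 Hz for beta) are all assumptions chosen for the example, and a plain discrete Fourier transform stands in for the more robust spectral estimators a real system would likely use.

```python
import math

def band_power(samples, fs, f_lo, f_hi):
    """Mean spectral power of `samples` between f_lo and f_hi (Hz), via a naive DFT."""
    n = len(samples)
    mean = sum(samples) / n
    centered = [s - mean for s in samples]  # remove any constant (DC) offset
    powers = []
    for k in range(1, n // 2 + 1):
        freq = k * fs / n  # frequency represented by DFT bin k
        if f_lo <= freq <= f_hi:
            re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(centered))
            im = -sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(centered))
            powers.append((re * re + im * im) / (n * n))
    return sum(powers) / len(powers) if powers else 0.0

# Synthetic one-second trace (hypothetical data): a strong 10 Hz rhythm in the
# mu band plus a weaker 20 Hz rhythm in the beta band.
FS = 128  # assumed sampling rate in Hz
signal = [
    2.0 * math.sin(2 * math.pi * 10 * t / FS) + 0.5 * math.sin(2 * math.pi * 20 * t / FS)
    for t in range(FS)
]

mu_power = band_power(signal, FS, 8, 12)
beta_power = band_power(signal, FS, 13, 30)
print(f"mu band power:   {mu_power:.4f}")
print(f"beta band power: {beta_power:.4f}")
```

A decoder would compute features like these over short sliding windows and pass them to a classifier; a drop in mu power over the motor cortex, for instance, is one of the changes a motor imagery system might look for.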

A.3. The Output and Feedback Phase: Closing the Digital Loop

The final step is the execution of the translated command and the provision of feedback to the user. The algorithm’s output (a predicted letter, word, or cursor movement) is sent to the computer, which displays it on the screen. This visual feedback is crucial. It creates a closed-loop system where the user sees the result of their mental effort and subconsciously adjusts their strategy, effectively “learning” to control the interface more efficiently over time. This biofeedback mechanism is what allows for the gradual refinement of control speed and accuracy.
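Speed and accuracy pull against each other, so it helps to fold them into a single number. One metric commonly used in BCI research is the information transfer rate proposed by Wolpaw and colleagues, which estimates how many bits each selection carries given the number of possible targets and the chance of picking the right one. The sketch below is a hedged illustration: the function names and the example figures (a 36-character grid, 90% accuracy, 5 selections per minute) are assumptions made up for the example, not measurements from any system described here.

```python
import math

def bits_per_selection(n_targets, accuracy):
    """Wolpaw information transfer per selection, in bits.

    B = log2(N) + P*log2(P) + (1 - P)*log2((1 - P) / (N - 1))
    """
    if n_targets < 2:
        raise ValueError("need at least two targets")
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    p = accuracy
    bits = math.log2(n_targets)
    if p > 0.0:  # the P*log2(P) term vanishes as P approaches 0
        bits += p * math.log2(p)
    if p < 1.0:  # the error term vanishes when every selection is correct
        bits += (1.0 - p) * math.log2((1.0 - p) / (n_targets - 1))
    return bits

def itr_bits_per_minute(n_targets, accuracy, selections_per_minute):
    """Information transfer rate: bits per selection times selection rate."""
    return bits_per_selection(n_targets, accuracy) * selections_per_minute

# Hypothetical speller: 36 characters, 90% accuracy, 5 selections per minute.
speller_itr = itr_bits_per_minute(36, 0.90, 5)
print(f"{speller_itr:.1f} bits/min")
```

The formula makes the trade-off visible: at chance-level accuracy the rate falls to zero no matter how fast selections are made, which is why feedback-driven gains in accuracy matter as much as raw speed.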

B. The Pioneers and Their Paradigm-Shifting Technologies

The field is no longer confined to academic labs. Several key players and public demonstrations have brought brain-interface typing into the global spotlight.

- The Stentrode by Synchron: This company has pioneered a minimally invasive approach. Their Stentrode device, about the size of a paperclip, is implanted into a blood vessel next to the motor cortex via a catheter, avoiding the need for open-brain surgery. It then records motor intent signals, allowing users to control external devices. Human trials have demonstrated the ability to send text messages and emails through direct thought.

- Neuralink’s Ambitious Vision: While more secretive, Neuralink has captured the public imagination with its ambitions. Their approach involves a sophisticated robotic surgeon placing ultra-thin, flexible threads packed with electrodes directly into the brain. The goal is to achieve an unprecedented number of connection points, potentially enabling typing speeds that rival or exceed traditional methods.

- The P300 Speller: A Foundational Classic: First described by Lawrence Farwell and Emanuel Donchin in 1988, this system is a cornerstone of non-invasive BCI typing. It presents a grid of letters and symbols that flash in random sequences. The user focuses on the desired character, and when it flashes, it elicits a reliable P300 response that the computer detects. While slower than invasive methods, it has provided a lifeline for communication for many locked-in patients.
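The decoding logic behind a P300 speller can be sketched in a few lines. The example below assumes the classic row/column variant, in which whole rows and columns of the grid flash and the target character sits at the intersection of the row and the column that evoke the largest response. Everything here is illustrative: the grid layout, the 100 Hz sampling rate, the 250 to 450 ms scoring window, and the synthetic data are assumptions, and a simple window average stands in for the trained classifier a real speller would typically use.

```python
import math
import random

GRID = ["ABCDEF", "GHIJKL", "MNOPQR", "STUVWX", "YZ1234", "56789_"]
FS = 100            # assumed sampling rate in Hz
EPOCH_SAMPLES = 80  # 0.8 s of signal recorded after each flash

def p300_score(epoch, fs=FS, window=(0.25, 0.45)):
    """Mean amplitude in the post-flash window where a P300 is expected."""
    start, stop = round(window[0] * fs), round(window[1] * fs)
    segment = epoch[start:stop]
    return sum(segment) / len(segment)

def decode_character(flashes, grid=GRID):
    """Pick the character at the best-scoring row and column.

    `flashes` is a list of (kind, index, epoch), where kind is "row" or "col".
    Scores are averaged over repeated flashes to suppress noise.
    """
    totals = {"row": [0.0] * len(grid), "col": [0.0] * len(grid[0])}
    counts = {"row": [0] * len(grid), "col": [0] * len(grid[0])}
    for kind, index, epoch in flashes:
        totals[kind][index] += p300_score(epoch)
        counts[kind][index] += 1

    def best(kind):
        means = [t / c if c else float("-inf")
                 for t, c in zip(totals[kind], counts[kind])]
        return means.index(max(means))

    return grid[best("row")][best("col")]

def synthetic_epoch(is_target, rng):
    """Hypothetical data: Gaussian noise, plus a bump near 300 ms for targets."""
    epoch = [rng.gauss(0.0, 1.0) for _ in range(EPOCH_SAMPLES)]
    if is_target:
        for i in range(EPOCH_SAMPLES):
            t = i / FS
            epoch[i] += 2.0 * math.exp(-((t - 0.30) ** 2) / (2 * 0.05 ** 2))
    return epoch

# Simulate a user attending to "P" (row 2, column 3) over 15 rounds of flashes.
rng = random.Random(0)
target_row, target_col = 2, 3
flashes = []
for _ in range(15):
    for r in range(len(GRID)):
        flashes.append(("row", r, synthetic_epoch(r == target_row, rng)))
    for c in range(len(GRID[0])):
        flashes.append(("col", c, synthetic_epoch(c == target_col, rng)))
rng.shuffle(flashes)  # flash order does not affect the averaged scores

decoded = decode_character(flashes)
print("Decoded character:", decoded)
```

Averaging over repeated flashes is the design choice that makes this work with noisy scalp recordings, and it is also why such spellers are slow: each character costs many flashes before the response stands out from the background.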

C. The Uncharted Territory: Ethical and Societal Implications

As with any transformative technology, the rise of brain-interface typing brings a host of profound ethical questions that society must grapple with before widespread adoption.


C.1. The Ultimate Privacy Frontier: Mental Integrity

The most immediate concern is the sanctity of our private thoughts. A device that reads neural signals to type could, in theory, be manipulated to access information we do not consciously wish to share. How do we protect “neural data” from being mined, sold, or used against us? The concept of “cognitive liberty,” the right to self-determination over our own brains and mental experiences, must be legally defined and fiercely protected.

C.2. The Neuro-Divide: A New Form of Inequality

Initially, this technology will be expensive. This creates the risk of a “neuro-divide,” where a privileged class gains access to enhanced cognitive communication and control, while the rest of society is left with their biological limitations. This could exacerbate existing social and economic inequalities in unprecedented ways, from academic and professional performance to social interaction.

C.3. Identity, Agency, and the Blurred Self

If our thoughts can directly manipulate the external world, where does our “self” end and the “tool” begin? The seamless integration of a BCI could lead to changes in personality, cognition, and sense of agency. Furthermore, the potential for hacking or malicious manipulation of these devices poses a direct threat to an individual’s autonomy and sense of self.

C.4. The Redefinition of Human Communication

What happens to nuance, empathy, and the art of conversation when communication becomes a pure, unfiltered stream of thought? The deliberative process of speaking or writing allows for reflection and editing. Direct thought-transfer might lead to more honest, but also more raw and potentially damaging, interactions. It could also render traditional forms of communication, like handwriting or oration, obsolete for certain tasks.

D. Beyond Text: The Expansive Future of Neural Interfaces

While typing is the most immediate application, it is merely the tip of the iceberg. The underlying technology of decoding neural intent has far-reaching implications.


D.1. Revolutionizing Assistive Technology and Medicine

The impact on individuals with severe disabilities is the most compelling and humane application. For people with ALS, spinal cord injuries, or locked-in syndrome, BCIs can restore not just communication but also control over their environment: manipulating robotic arms, driving wheelchairs, and interacting with smart home systems, thereby restoring a profound degree of independence and dignity.

D.2. The Next Generation of Human-Computer Interaction (HCI)

In the future, we may navigate operating systems, design 3D models, or play immersive video games using only our thoughts. This could lead to user interfaces that are fundamentally intuitive, adapting to our cognitive state and intentions in real time, making our interaction with technology more fluid and efficient than ever before.

D.3. The Emergence of Collaborative Consciousness and Telepathy

Perhaps the most science-fiction-like prospect is the potential for direct brain-to-brain communication. While in its infancy, research has demonstrated basic “thought transmission” between individuals. This could eventually evolve into a form of digital telepathy, allowing for the sharing of complex ideas, emotions, and sensory experiences, fundamentally altering the nature of collaboration, education, and human relationships.

D.4. Merging with Artificial Intelligence

The ultimate symbiosis would be a closed-loop system where the BCI not only reads our intentions but also writes information back into our brain. Imagine having instantaneous access to a vast digital database or computational power as an integrated part of your thought process. This concept of a “human-AI hybrid” is the long-term horizon for this technology, promising to amplify human intelligence in ways we can barely conceive.

Conclusion: Navigating the New Neural Epoch

Brain-interface typing is far more than a novel gadget; it is the vanguard of a fundamental shift in the human experience. It represents the final dismantling of the physical barrier that has long separated the boundless world of our thoughts from the tangible world of action and communication. As we stand at this precipice, the path forward is fraught with both immense promise and profound peril. The technology holds the key to liberating those trapped by physical limitations and augmenting human capabilities to unprecedented levels. Yet, it simultaneously forces us to confront deep ethical questions about privacy, equality, and the very nature of our identity.

The success of this digital revolution will not be measured solely in words per minute, but in our collective wisdom to guide its development with caution, empathy, and a steadfast commitment to human values. The future of communication is not just digital; it is intrinsically, and irrevocably, neural. We are not just building tools to type with our minds; we are learning to weave the very fabric of our consciousness into the digital tapestry of the world.